I spent some time getting a ReAct LLM (Yao et al. 2022) to autonomously answer questions. Improving performance turned out to require some changes that I haven't seen discussed in my technical haunts. Through failure case analysis and targeted interventions, I was able to drive performance from on the order of 1% to north of 80% over a couple of weeks.

ReAct ("Reasoning and Acting") builds on chain-of-thought prompting. It gives the LLM more structure around thinking and acting, which reduces hallucination and error propagation. With ReAct, we use a straightforward LLM prompt that describes the actions the agent can take to get external information. The prompt also specifies the expected output: repeated Thought / Action / Action Input / Observation blocks, ending in a Final Answer. The LLM then produces text that follows those rules in collaboration with a backend system, which orchestrates the process and keeps the LLM on track. In particular, the backend parses the LLM outputs, calls the Action systems to produce the Observations, and writes the prompt for the LLM.
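To make the division of labor concrete, here is a minimal sketch of the prompt the backend might write. The `Action` class, the `build_prompt` function, and the format wording are my own assumptions, loosely modeled on the Thought / Action / Action Input / Observation structure described above; this is not LangChain's actual template or API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    """A tool the agent may call; `description` is shown verbatim to the LLM."""
    name: str
    description: str


# Hypothetical format instructions (not LangChain's exact wording).
FORMAT_INSTRUCTIONS = """Use the following format:

Question: the input question you must answer
Thought: you should always think about what to do
Action: the action to take, one of [{names}]
Action Input: the input to the action
Observation: the result of the action
(Thought/Action/Action Input/Observation can repeat several times)
Thought: I now know the final answer
Final Answer: the final answer to the original input question"""


def build_prompt(actions: list[Action], question: str, scratchpad: str = "") -> str:
    """Assemble action descriptions, format rules, the question, and the transcript so far."""
    tool_lines = "\n".join(f"{a.name}: {a.description}" for a in actions)
    names = ", ".join(a.name for a in actions)
    return (
        "Answer the question using the actions below.\n\n"
        f"{tool_lines}\n\n"
        f"{FORMAT_INSTRUCTIONS.format(names=names)}\n\n"
        f"Question: {question}\n"
        f"{scratchpad}Thought:"
    )
```

The prompt always ends with `Thought:`, so the LLM's next tokens are its reasoning for the current step.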

I'll give a concrete example (text taken from the LangChain docs), with the sources of the text colored: user input in dark orange, lines from the coordinating backend system in blue, and text generated by the LLM in red on a grey background:

Question: Who is Leo DiCaprio's girlfriend? What is her current age raised to the 0.43 power?

Thought: I need to find out who Leo DiCaprio's girlfriend is and then calculate her age raised to the 0.43 power.

Action: Search

Action Input: "Leo DiCaprio girlfriend"

Observation: Camila Morrone

Thought: I need to find out Camila Morrone's age

Action: Search

Action Input: "Camila Morrone age"

Observation: 25 years

Thought: I need to calculate 25 raised to the 0.43 power

Action: Calculator

Action Input: 25^0.43

Observation: Answer: 3.991298452658078

Thought: I now know the final answer

Final Answer: Camila Morrone is Leo DiCaprio's girlfriend and her current age raised to the 0.43 power is 3.991298452658078.

Each time control returns to the backend, the backend parses what the LLM produced. It throws away everything that isn't immediately relevant (like any oh-so-eagerly-hallucinated Observations). Then the backend constructs a new, slightly longer prompt with a real Observation, and prompts the LLM to complete it a bit further. Eventually the LLM produces a Final Answer, which the backend parses and returns to the user.
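The parse-and-reprompt cycle can be sketched in a few lines of Python. This is a simplified illustration, not LangChain's implementation: `parse_step` and `run_agent` are hypothetical names, `llm` is any function from prompt to completion, and the action descriptions are left out of the prompt for brevity. Everything from the first `Observation:` onward in a completion is discarded before the real Observation is appended.

```python
import re
from typing import Callable

# "Action: <name>" immediately followed by "Action Input: <input>" on the next line.
ACTION_RE = re.compile(r"Action:\s*(.+?)\s*\nAction Input:\s*(.+)")
FINAL_RE = re.compile(r"Final Answer:\s*(.+)", re.DOTALL)


def parse_step(completion: str):
    """Return ("final", answer) or ("action", name, action_input, kept_text)."""
    # Everything from the first "Observation:" on is the LLM's own guess: discard it.
    head = completion.split("\nObservation:")[0]
    final = FINAL_RE.search(head)
    if final:
        return ("final", final.group(1).strip())
    m = ACTION_RE.search(head)
    if m is None:
        raise ValueError(f"unparseable completion: {completion!r}")
    # Keep the text only up to the end of the Action Input line.
    return ("action", m.group(1).strip(), m.group(2).strip().strip('"'), head[: m.end()])


def run_agent(llm: Callable[[str], str], actions: dict[str, Callable[[str], str]],
              question: str, max_steps: int = 8) -> str:
    """Minimal ReAct loop: prompt, parse, run the action, append a real Observation."""
    prompt = f"Question: {question}\nThought:"
    for _ in range(max_steps):
        step = parse_step(llm(prompt))
        if step[0] == "final":
            return step[1]
        _, name, action_input, kept = step
        observation = actions[name](action_input)
        prompt += f"{kept}\nObservation: {observation}\nThought:"
    raise RuntimeError("no Final Answer within max_steps")
```

In this sketch an unknown action name simply raises `KeyError`; a more forgiving backend would return an error message as the Observation instead.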

So, that's ReAct. It didn't work very well for me out of the box. The LangChain implementation plus a dozen possible actions produced sub-5% performance on questions it really should have been able to answer.

So, I embarked on a ReAct performance improvement quest. What worked for me, in order from least surprising to most surprising to me, was:

- Prompt engineering the action descriptions to improve dispatch. First-pass docstrings are often flawed. We all have this problem: the docstring writer has high context but the reader does not, and what is salient to the writer isn't always salient to the reader. So I made sure each available action was described in one sentence starting with a verb. Then I re-scoped each "action" by how clearly I could write a user-facing description for it (rather than by how the underlying APIs happen to be broken apart).

- Making parameterization errors visible to enable recovery. I found the LLM often chose a poor

Action Input, which caused the backend to receive exceptions when it tried to execute the LLM's instructions. I wanted to make the "bad parameterization" problem more tangible to the LLM. I took this on in three ways: (1) with a priori clues — I described the format of the input in the description (e.g., is it an integer, enum, string, ...), (2) with data typing (e.g., does the UUID that the LLM wants to pass refer to an object of the appropriate type for this action), (3) with a posteriori clues — I updated the backend to include meaningful error messages as theObservationfor bad parameterizations, so the LLM would get another crack at it. - Removing all "early stop" actions to encourage actually trying. LangChain allows you to define "early stop" actions. If the LLM picks one of these actions, the entire reasoning chain gets aborted. I found that the LLM would often pick an early stop action as its very first action. So, I strongly encouraged it to always try to answer by removing all early stop actions. It is still possible for the system to go immediately from the

Questioninto aFinal Answerof "I have no idea what to do to answer this". But in practice the system is actually trying now, when it often wasn't before. - Dropping old

Thoughtsso it can't confuse its guesses for facts. I was semi-frequently seeing the LLM hypothesize a wild idea, decide on an appropriate action and input to test that wild idea, receive the right answer, and then write aFinal Actionthat treated the wild idea as if it were a fact. This behavior is understandable, and it is also very bad. So, now the LLM doesn't get to see its previousThoughts. - Actively seeding

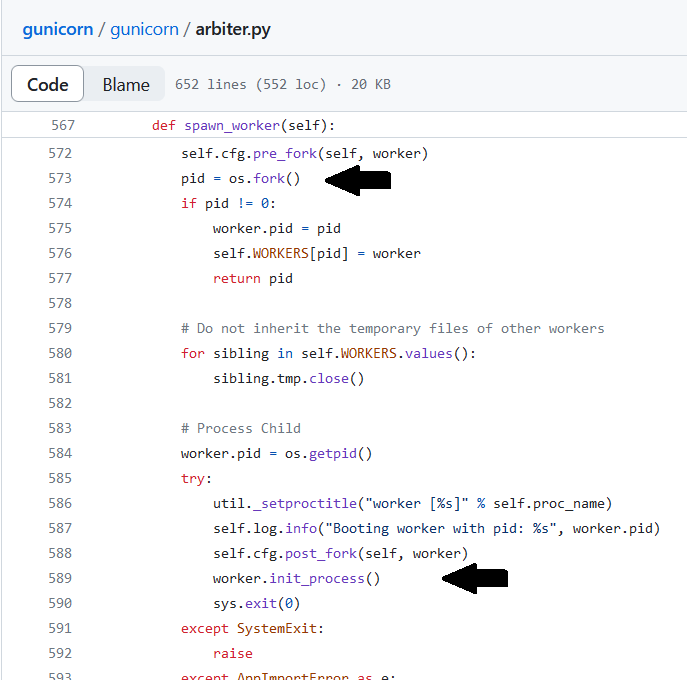

Thoughtsto recover from unparseable LLM responses. Sometimes the LLM thinks the most likely next token sequence is nothing, and we get an empty string as the completion. Sometimes it doesn't generate text in the required format. Sometimes it goes off the rails in some other way. All of these break parsing. In the LangChain case, the backend has no effective way to get the whole agent back on track again, so it raises an exception and exits. Ideally, though, it would be able to recover. So, when the LLM gives garbage responses, I've started puttingThoughtsin its head. Extending the system prompt slightly past the colon with an innocuous phrase — to something likeThought: I need to decide on an Action and Action Input— is enough to break the LLM free. This "seeding its thoughts" approach works well even when the temperature is turned down to 0.0 (with maximal determinism during text generation), because the approach ensures a slightly different prompt from the one that failed.
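The three parameterization fixes can be sketched as a backend helper that never lets a bad Action Input escape as an exception. Everything here is hypothetical: `Tool`, `run_action`, and the `OBJECT_KINDS` registry stand in for whatever action and object layer a real system has. The description states the input format (the a priori clue), the UUID check is the data typing, and returned error strings become the Observation (the a posteriori clue).

```python
import uuid
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Tool:
    name: str
    description: str                  # one sentence, starts with a verb, states the input format
    func: Callable[[str], str]
    input_kind: Optional[str] = None  # if set, input must be a UUID of an object of this kind


# Hypothetical registry mapping object UUIDs to their kinds.
OBJECT_KINDS: dict[str, str] = {}


def run_action(tools: dict[str, Tool], name: str, arg: str) -> str:
    """Execute an action, returning an error message (not raising) on bad parameterization."""
    tool = tools.get(name)
    if tool is None:
        return f"Error: unknown action {name!r}. Valid actions: {', '.join(tools)}."
    if tool.input_kind is not None:
        try:
            key = str(uuid.UUID(arg))
        except ValueError:
            return f"Error: {name} expects a UUID, got {arg!r}."
        kind = OBJECT_KINDS.get(key)
        if kind != tool.input_kind:
            return (f"Error: {arg} refers to a {kind or 'nonexistent'} object, "
                    f"but {name} needs a {tool.input_kind}.")
    try:
        return tool.func(arg)
    except Exception as exc:  # surface the failure to the LLM as the Observation
        return f"Error: {name} failed on input {arg!r} ({exc}). Check the input format and retry."
```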
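Dropping old Thoughts amounts to leaving them out when rendering the transcript for the next prompt. Here is a minimal sketch, with `Step` and `render_scratchpad` as hypothetical names: only Actions, Action Inputs, and real Observations are carried forward, and the LLM writes a fresh Thought each turn.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    thought: str
    action: str
    action_input: str
    observation: str


def render_scratchpad(steps: list[Step], keep_thoughts: bool = False) -> str:
    """Render prior steps for the next prompt; by default, old Thoughts are omitted."""
    lines: list[str] = []
    for s in steps:
        if keep_thoughts:
            lines.append(f"Thought: {s.thought}")
        lines += [f"Action: {s.action}",
                  f"Action Input: {s.action_input}",
                  f"Observation: {s.observation}"]
    lines.append("Thought:")  # the LLM writes only its newest Thought
    return "\n".join(lines)
```

With a step whose Thought was "Maybe she is 99?", the rendered prompt keeps the Search and its real Observation but no longer contains the guess.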
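Seeding a Thought after a parse failure can be sketched as one retry with a slightly extended prompt. The names `complete_with_seeding` and `parseable` are hypothetical, and the seed phrase is the one quoted above. Because the retried prompt differs from the failed one, even a deterministic (temperature 0.0) model can produce a different completion.

```python
import re
from typing import Callable

ACTION_RE = re.compile(r"Action:\s*(.+?)\s*\nAction Input:\s*(.+)")
FINAL_RE = re.compile(r"Final Answer:\s*(.+)", re.DOTALL)

# Seed phrase appended right after the trailing "Thought:" of the prompt.
SEED = " I need to decide on an Action and Action Input"


def parseable(completion: str) -> bool:
    """True if the completion (minus any hallucinated Observation) has an Action or Final Answer."""
    head = completion.split("\nObservation:")[0]
    return bool(FINAL_RE.search(head) or ACTION_RE.search(head))


def complete_with_seeding(llm: Callable[[str], str], prompt: str) -> tuple[str, str]:
    """Continue `prompt` (ending in "Thought:"); on an unparseable reply, retry once
    with a seeded Thought. Returns (prompt_actually_used, completion)."""
    completion = llm(prompt)
    if parseable(completion):
        return prompt, completion
    seeded = prompt + SEED  # a slightly different prompt from the one that failed
    completion = llm(seeded)
    if parseable(completion):
        return seeded, completion
    raise ValueError("LLM output unparseable even after seeding a Thought")
```

A single retry is an arbitrary choice in this sketch; a backend could rotate through several seed phrases before giving up.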

With these changes, I was able to raise the performance from <5% to on the order of 70-90% pretty quickly.

I suppose in addition to "keep thinking; a simpler solution will come", the wider lesson that was reinforced for me here is that popular ideas can easily suck up more than their share of oxygen in the public conversation (even good ideas like prompt engineering!). "Don't stop with what's popular; make sure everyone is looking at the real behaviors" is my takeaway for myself.